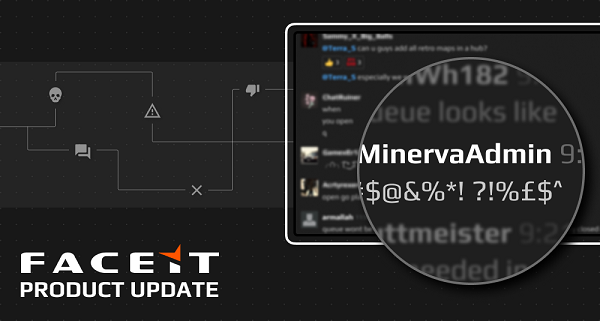

In a blog post, Maria Laura Scuri reveals that FACEIT has tested and now officially launched Minerva, an admin AI developed in partnership with Google and trained through machine learning to identify toxic behaviour in CS:GO.

Since the initial testing of Minerva in late August, there has been a 20% reduction in toxic messages.

The decision to use AI comes as FACEIT’s match volume makes it impossible for admins to be in the server for every game, meaning toxic behaviour can slip past a purely manual review system.

Minerva takes on the role of a human admin with a presence on the server: it identifies toxic behaviour, takes action against the players involved and gives them feedback on what was wrong with their behaviour so they have a chance to fix it in future.

Since August, over 200,000,000 chat messages have been analysed and 7,000,000 of them marked as toxic. As a result, Minerva has issued 90,000 warnings and 20,000 bans for verbal abuse and spam across in-game messages and direct conversations between users.

In addition to dealing with toxicity, FACEIT has been taking steps to counter the most toxic users as well as cheaters, smurfs and boosters using the platform.

Accounts flagged as suspicious were required to verify their phone number. Over the last two months, this SMS verification was tested with over 250,000 accounts, and 50,000 of them were blocked before they were able to play.

The blog states that there is a plan ‘to make this feature available to Organizers to allow them to restrict access to tournaments and hubs to users without a verified phone number’ in the future.

This comes as pros have become more vocal about players who are banned on one third-party platform playing suspiciously in qualifiers on a rival platform. An infamous user known as ‘holmyz’ was already banned on FACEIT before entering other qualifiers. If other platforms took similar measures, they could prevent those later found to indeed be hacking on ESEA as well from entering the competitive space in the first place.